Did you know that cement concrete is the world’s most consumed man-made construction material? Yes! After water, it is the most consumed material on the planet, and that is largely because of the unique physical properties of cement.

So, without wasting any time, let’s jump straight in and see which physical properties of cement make it such a popular construction material.

Cement as Popular Construction Material

Cement is a readily available binding material throughout the construction world. Although this article is only about the physical properties of cement, its chemical properties also play their part in making it a popular construction material. Because of its fire resistance, durability, and reusability, there is little doubt that cement will remain popular in the years to come.

The CO2 emissions per tonne of concrete have already dropped by 18% and modern sustainable guidelines can help further reduce the impact of cement production on our environment.

Physical Properties of cement

Broadly, the properties of cement are divided into two types: physical properties and chemical properties. These properties vary with its composition, i.e. the four basic components of cement. The chemical composition of cement is defined by:

- Tri-calcium Silicate

- Di-Calcium Silicate

- Tri-Calcium Aluminate

- Tetra-Calcium Alumino Ferrite

These components are also known as Bogue’s compounds of cement.

The physical properties of cement, such as color, fineness, consistency, and strength, all depend on the above-mentioned constituents of Portland cement.

[su_box title=”DID you know why color of ordinary Portland cement is grey?” box_color=”#4be97f”]The grey color of cement comes from its iron content, and C4AF (tetra-calcium aluminoferrite) is mainly responsible for the grey color of ordinary Portland cement. For architectural and aesthetic applications, white Portland cement is also available. White ordinary Portland cement (WOPC) has a higher degree of whiteness, achieved by substantially modifying the manufacturing method, which is why it is more expensive than grey cement.[/su_box]

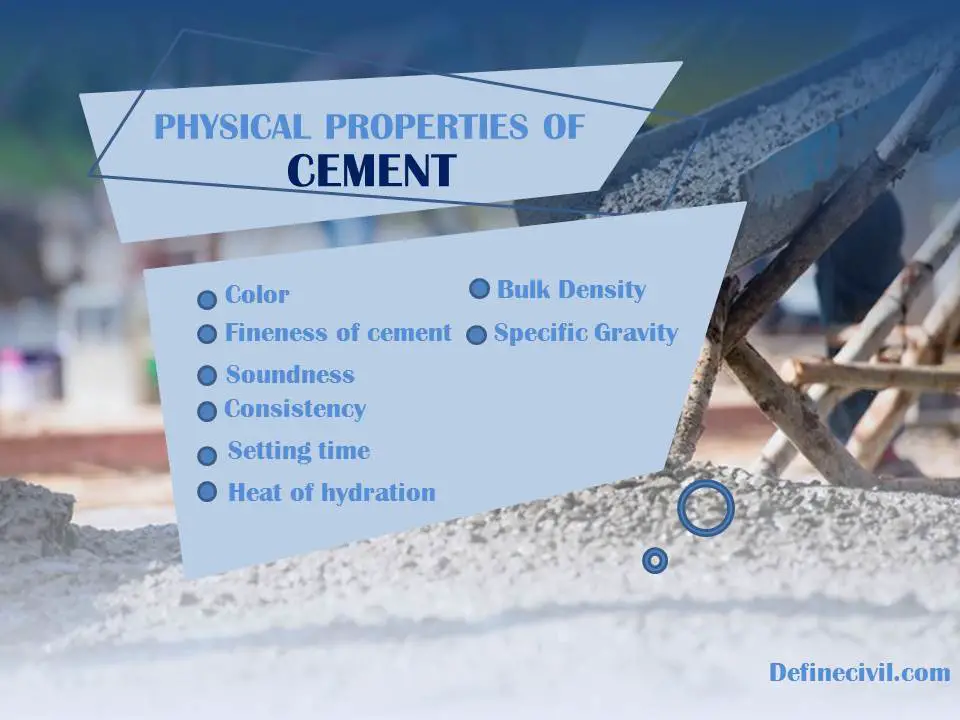

Impeccable Physical Properties of Portland cement

Here is a complete list of important physical properties of cement:

- Color

- Fineness of cement

- Soundness

- Consistency

- Setting time

- Heat of hydration

- Bulk density

- Specific gravity (Relative density)

So, let’s discuss each one by one.

Color

As explained above, cement gets its grey color from C4AF. But here is the thing: the C4AF mineral itself is actually brown. So, theoretically, cement made with pure C4AF would come out brown.

But these minerals never exist in pure form, and cement is made from natural minerals, so don’t expect to see such a color. Because the C4AF is contaminated by magnesium, cement paste has that greyish tint.

So the usual color of cement is greenish-grey, which comes down to the amount of light absorbed by the cement powder. Now you might be wondering where all the other colors seen in concrete come from. Depending on your aesthetic requirements, you can add pigments to cement concrete to achieve any desired color.

Fineness of cement

As the word “fineness” implies, this physical property of cement is a measure of its quality. Fineness is defined and determined by the particle size of cement. Cement with finer particles is generally considered superior to cement with coarser particles.

The fineness of cement is determined in the laboratory by sieving a sample through a 90 µm sieve. According to the Indian standard, the weight of cement retained on the standard 90 µm sieve shall not exceed 10% of the sample weight. The hydration rate of cement is directly related to its fineness: coarser cement takes more time to hydrate than finer cement, and finer cement also develops more strength. Bleeding, on the other hand, reduces as fineness increases.
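The sieve check described above is simple arithmetic, so here is a minimal Python sketch of it. The function names and the sample readings are hypothetical and only for illustration; the 10% limit is the one quoted in the text.

```python
# Limit quoted in the text: residue on the 90 µm sieve must not exceed 10 %.
MAX_RESIDUE_PERCENT = 10.0


def sieve_residue_percent(sample_mass_g: float, retained_mass_g: float) -> float:
    """Return the mass retained on the sieve as a percentage of the sample mass."""
    if sample_mass_g <= 0:
        raise ValueError("sample mass must be positive")
    if not 0 <= retained_mass_g <= sample_mass_g:
        raise ValueError("retained mass must lie between 0 and the sample mass")
    return retained_mass_g * 100.0 / sample_mass_g


def passes_fineness(sample_mass_g: float, retained_mass_g: float) -> bool:
    """True when the residue is within the 10 % limit."""
    residue = sieve_residue_percent(sample_mass_g, retained_mass_g)
    return residue <= MAX_RESIDUE_PERCENT


if __name__ == "__main__":
    # Hypothetical reading: 100 g sample, 6.5 g left on the sieve.
    residue = sieve_residue_percent(100.0, 6.5)
    print(f"Residue: {residue:.1f} %, passes: {passes_fineness(100.0, 6.5)}")
```

A sample that leaves more than 10 g on the sieve out of 100 g would fail this check.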


Soundness

Soundness is an important property of cement that defines its ability to resist any change in volume after setting and hardening. Because concrete is poured into structural elements with well-defined dimensions as per the design drawings, it must keep its volume. By “change in volume”, I mean both decrease and increase. Unsoundness of cement is caused by an excess of lime or magnesia, which leaves free CaO and MgO in the cement.

The soundness test of cement is carried out in the laboratory by the Le Chatelier method (IS:5514) or the autoclave method. According to the Indian standard, the expansion measured with the Le Chatelier apparatus must not exceed 10 mm.
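As a rough sketch of how the 10 mm limit is applied, the snippet below assumes the Le Chatelier result is taken as the difference between two readings of the gap between the indicator points, one before and one after boiling. That procedure detail, the function names, and the sample readings are assumptions for illustration and are not spelled out in this article.

```python
# Limit quoted in the text for the Le Chatelier test.
MAX_EXPANSION_MM = 10.0


def le_chatelier_expansion_mm(gap_before_mm: float, gap_after_mm: float) -> float:
    """Expansion taken as the increase in the gap between the indicator points."""
    return gap_after_mm - gap_before_mm


def is_sound(gap_before_mm: float, gap_after_mm: float) -> bool:
    """True when the measured expansion stays within the 10 mm limit."""
    return le_chatelier_expansion_mm(gap_before_mm, gap_after_mm) <= MAX_EXPANSION_MM


if __name__ == "__main__":
    # Hypothetical readings in millimetres.
    expansion = le_chatelier_expansion_mm(12.0, 15.5)
    print(f"Expansion: {expansion:.1f} mm, sound: {is_sound(12.0, 15.5)}")
```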

Consistency

Another reason for the popularity of concrete is its ability to flow. This ability is described by a term called “consistency”. The consistency of cement defines the amount of water that must be added to produce a cement paste of standard consistency.

The term “standard consistency” has a precise definition: it is the consistency of a cement paste that allows the Vicat plunger to penetrate to a depth of 5 to 7 mm from the bottom of the Vicat mould.

Because we want to pour concrete into formwork of various shapes, we need a plastic, or rather flowable, cement paste that is workable without any segregation or bleeding. You can’t expect a cement to be workable if it doesn’t have a standard consistency. The strength of concrete depends heavily on its consistency as well.

The consistency of cement is determined with the Vicat apparatus, standardized by Indian Standard IS:5513-1976. Typical values are in the range of 26 to 33%, but it depends on your project requirements. If the cement doesn’t have a consistency in this range, there might be a problem with its fineness or some of its chemical properties.

The consistency gives you the water content that is required for the initial setting of the cement paste. The setting time of cement also depends on its consistency.
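To make the definition concrete, here is a small Python sketch that picks the standard consistency from a series of trial mixes. It assumes consistency is expressed as water mass as a percentage of cement mass, and that each trial records how far from the bottom of the mould the plunger stopped. The function names and trial values are hypothetical.

```python
from typing import Optional, Sequence, Tuple

# Acceptance band quoted in the text: plunger stops 5 to 7 mm from the mould bottom.
MIN_GAP_MM = 5.0
MAX_GAP_MM = 7.0


def water_percent(water_mass_g: float, cement_mass_g: float) -> float:
    """Water content as a percentage of the cement mass."""
    if cement_mass_g <= 0:
        raise ValueError("cement mass must be positive")
    return water_mass_g * 100.0 / cement_mass_g


def standard_consistency(trials: Sequence[Tuple[float, float]]) -> Optional[float]:
    """Return the water percentage of the first trial whose plunger reading
    falls in the 5 to 7 mm band, or None if no trial qualifies.

    Each trial is (water_percent, gap_from_bottom_mm).
    """
    for percent, gap_mm in trials:
        if MIN_GAP_MM <= gap_mm <= MAX_GAP_MM:
            return percent
    return None


if __name__ == "__main__":
    # Hypothetical trials: more water lets the plunger sink closer to the bottom.
    trials = [(26.0, 20.0), (28.0, 11.0), (30.0, 6.0)]
    print("Standard consistency (%):", standard_consistency(trials))
    print("120 g water in 400 g cement (%):", water_percent(120.0, 400.0))
```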

[su_box title=”Do you know?” box_color=”#4be97f”]Standard consistency is sometimes also referred to as normal consistency.[/su_box]

Setting time

The setting of cement is actually a series of chemical reactions in which the cement components react with water. When mixed with water, cement hydrates and changes from a plastic state to a hardened state. The time required for this change is called the setting time of cement.

To help with field operations and material handling, the setting time is further divided into two periods:

- Initial setting time

- Final setting time

These terms have formal definitions. For easy understanding, we can say that the initial setting time is the time required for cement paste to hydrate and partially lose its plasticity. The final setting time is the time required for cement paste to lose its plasticity completely.

Determining the setting time of cement is very important because there may be a distance to cover between the point where concrete is batched and the site where it is poured. This is especially important for ready-mixed concrete, where the transit mixer may have to travel several kilometers.

The initial setting time is an important consideration for handling and placing, whereas the final setting time matters for formwork removal.

The setting time of cement is determined with the Vicat apparatus as per IS 4031 or ASTM C 191. The needles used for the initial and final setting times are different. The needle for the initial setting time is 50 mm long and 1.13 mm in diameter with a flat end, whereas the needle for the final setting time is 30 mm long and has a circular cutting edge 5 mm in diameter.

The setting time of cement varies with type and grade, but in most cases the specified limits are an initial setting time of at least 30 minutes and a final setting time of at most 600 minutes (10 hours).
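Here is a short Python sketch of how these figures might be used in practice. It treats 30 minutes as a lower bound on the initial setting time and 600 minutes as an upper bound on the final setting time, which is an assumption about how the quoted figures are meant to be read. The function names and sample values are hypothetical.

```python
# Figures quoted in the text, read here as limits (an assumption).
MIN_INITIAL_SET_MIN = 30.0
MAX_FINAL_SET_MIN = 600.0


def setting_times_ok(initial_set_min: float, final_set_min: float) -> bool:
    """True when the initial set is not too early and the final set is not too late."""
    if final_set_min < initial_set_min:
        raise ValueError("final setting time cannot be earlier than initial setting time")
    return initial_set_min >= MIN_INITIAL_SET_MIN and final_set_min <= MAX_FINAL_SET_MIN


def can_place_before_initial_set(transit_min: float, initial_set_min: float) -> bool:
    """True when the concrete reaches the site before the initial set."""
    return transit_min < initial_set_min


if __name__ == "__main__":
    # Hypothetical test result: initial set at 95 min, final set at 280 min.
    print("Within limits:", setting_times_ok(95.0, 280.0))
    # Hypothetical 45-minute trip in the transit mixer.
    print("Placeable after transit:", can_place_before_initial_set(45.0, 95.0))
```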

Heat of hydration

In simple words, heat of hydration is the amount of heat generated by the exothermic chemical reaction between water and ordinary Portland cement. Heat of hydration depends on the chemical composition of cement, such as the amounts of C3S and C3A, along with the water-cement ratio. Fineness and curing temperature are also factors to consider.

It is critical to determine the heat of hydration because in mass concrete structures such as dams, non-uniform thermal shrinkage can cause cracking and disintegration of the structure. As water is required for the hydration reaction, it is also very important to cure the concrete continuously by a suitable method throughout setting and early hardening.

Bulk Density & Specific gravity

For ordinary Portland cement, the density of cement is taken as 3.15 g/cc, which corresponds to a specific gravity of about 3.15. According to ASTM C188, the density of cement is defined as the mass of a unit volume of the solids. The density of cement is an important consideration in the design and control of concrete mixtures.

The density of cement is determined with a graduated Le Chatelier flask. Be careful when speaking about density, because it is different for cement and for concrete. The density of plain cement concrete (PCC) is about 2400 kg/m³, while for reinforced cement concrete (RCC) it varies between 2500 and 2600 kg/m³.
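The flask measurement comes down to mass divided by displaced volume. The Python sketch below assumes the flask is read once before and once after the cement is added, so the difference in readings is the volume of the cement solids. The function names, the sample mass, and the flask readings are hypothetical.

```python
def cement_density_g_per_cc(cement_mass_g: float,
                            initial_reading_ml: float,
                            final_reading_ml: float) -> float:
    """Density as mass of cement divided by the liquid volume it displaces."""
    displaced_ml = final_reading_ml - initial_reading_ml
    if displaced_ml <= 0:
        raise ValueError("final reading must be greater than initial reading")
    return cement_mass_g / displaced_ml


def g_per_cc_to_kg_per_m3(density_g_per_cc: float) -> float:
    """Convert g/cc to kg/m³ (1 g/cc = 1000 kg/m³)."""
    return density_g_per_cc * 1000.0


if __name__ == "__main__":
    # Hypothetical test: 64 g of cement raises the flask reading from 0.5 to 20.8 mL.
    density = cement_density_g_per_cc(64.0, 0.5, 20.8)
    print(f"Density: {density:.2f} g/cc ({g_per_cc_to_kg_per_m3(density):.0f} kg/m³)")
```

With these made-up readings the result lands close to the 3.15 g/cc figure quoted for ordinary Portland cement.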

The bottom line

So, I’ve tried to explain all the physical properties of cement for a better understanding. If you want to explore these properties further, please discuss them in the comment section below.
